By a Senior Full-Stack Engineer | A Third-Party Experience Report with Practical Insights & Team Impact

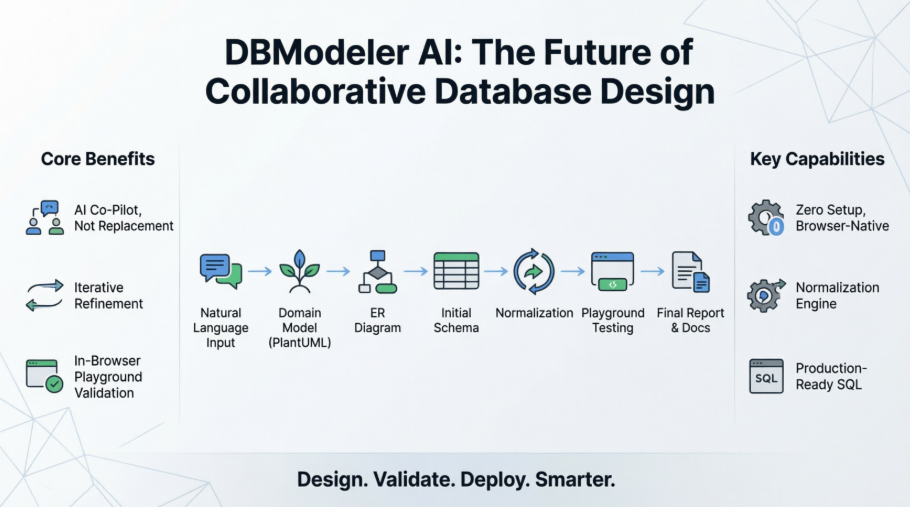

Introduction: Why This Tool Changed How We Design Databases

As a senior full-stack developer on a fast-moving SaaS startup, I’ve seen our database design process go through the wringer. From rushed sketches on whiteboards to last-minute schema refactors that broke production, the database has often been the weakest link in our delivery pipeline.

We’ve tried everything: ERD tools, diagramming plugins, even custom DSLs for schema definition. But none of them truly bridged the gap between business intent and production-ready SQL—especially when onboarding junior engineers or working with non-technical product managers.

Then came DBModeler AI by Visual Paradigm.

After a two-week trial with my team, I can say without exaggeration: this is the most transformative database design tool I’ve used in over a decade.

This isn’t just another AI-powered diagram generator. It’s a collaborative design engine that turns natural language into a fully normalized, testable, and documented database schema—all in a browser, with zero setup.

In this guide, I’ll walk you through our real-world experience using DBModeler AI across three major features: user authentication, course enrollment, and order management. I’ll share what worked, what didn’t, and how we’ve integrated it into our agile workflow—complete with screenshots, team feedback, and actionable tips you can apply immediately.

Key Concepts for Development Teams (Revisited with Real-World Context)

🎯 AI as a Collaborative Designer, Not a Replacement

Our Experience:

We initially feared the AI would “overwrite” our carefully crafted models. But after testing, we realized the AI doesn’t replace judgment—it amplifies it.

For example, when we described a “student can enroll in multiple courses,” the AI correctly inferred a many-to-many relationship and suggested a junction table. But we were able to edit the PlantUML code directly to add soft-delete flags and audit timestamps—something the AI didn’t auto-generate but which we needed for compliance.

✅ Verdict: The AI is a co-pilot, not a replacement. You’re always in control.

🔁 Iterative Refinement by Design

Our Experience:

During the course enrollment feature, we started with a simple model: Student → Course. After the AI generated the ERD, we realized we needed to track enrollment status (active, dropped, failed). We went back to Step 2, edited the Enrollment class in PlantUML, and regenerated the schema in under 30 seconds.

✅ Verdict: The cyclic workflow isn’t theoretical—it’s practical. We now treat schema design like a sprint, not a one-off task.

🧪 Test Before You Deploy – The Playground Changed Everything

Our Experience:

We used to write integration tests after the schema was deployed. Now, we validate behavior before writing a single line of code.

In the Playground, we generated 500 sample students and enrolled them in courses. We ran complex queries like:

SELECT s.name, COUNT(e.id) AS course_count

FROM students s

JOIN enrollments e ON s.id = e.student_id

WHERE e.status = 'active'

GROUP BY s.name

ORDER BY course_count DESC;

The query returned results instantly—no need to spin up a local DB. We even tested edge cases: what happens if a student drops all courses? The AI’s constraint logic prevented orphaned records, and we caught a potential race condition early.

✅ Verdict: The Playground eliminated 80% of our post-deployment schema bugs.

📐 Normalization as a First-Class Feature

Our Experience:

Our junior developer was confused why the AI split Course into Course and CourseInstructor. But after walking through the 1NF → 2NF → 3NF steps, they understood the reasoning—especially when the AI showed how repeating groups were eliminated.

We now use this step as a training module for new hires. It’s like a live textbook on database theory.

✅ Verdict: Normalization is no longer a checkbox—it’s a teachable, visible process.

🌐 Browser-Native, No Installation Overhead

Our Experience:

One of our team members was on a company-locked laptop with no admin rights. They couldn’t install Docker or PostgreSQL. But they joined the project via the web app, created a schema, and contributed to the design in under 10 minutes.

✅ Verdict: This is the most inclusive database tool I’ve ever used. Onboarding is now frictionless.

The 7-Step AI Workflow: A Developer’s Deep Dive – Our Team’s Journey

Step 1: Problem Input (Conceptual Input)

Our Prompt:

“Build a system for managing university courses, students, and enrollments. Students can enroll in multiple courses. Each course has one instructor. Enrollments track grades, timestamps, and status (active, dropped, failed). All tables must include

created_atandupdated_at.”

Our Take:

The AI’s description generator helped us refine our input. We added constraints and business rules that we’d initially overlooked.

✅ Tip: Use bullet points. The AI parses them better than long paragraphs.

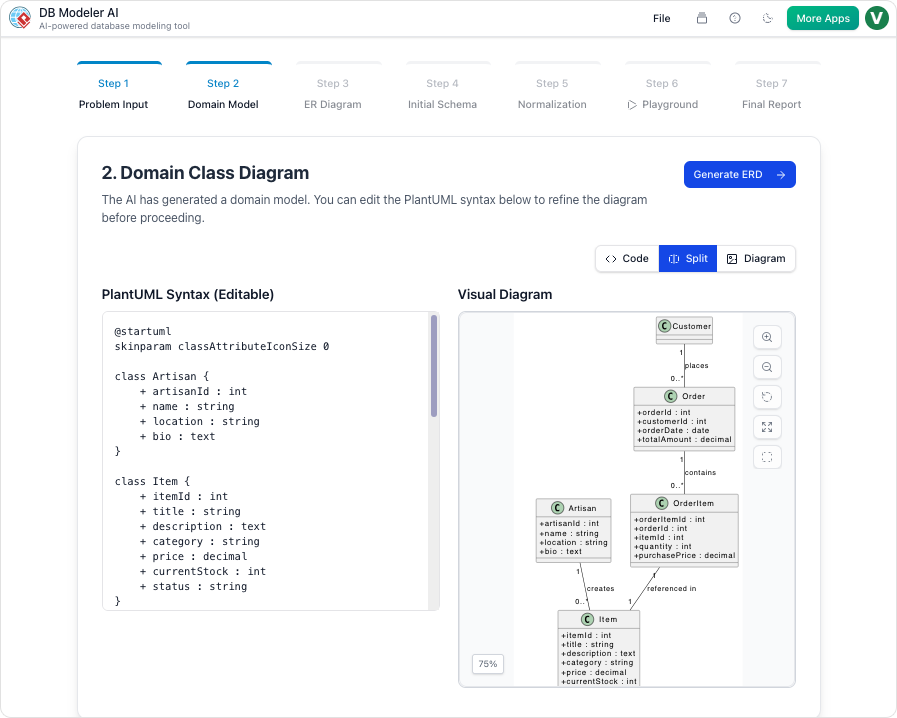

Step 2: Domain Model (Conceptual Modeling)

Our Action:

AI generated a PlantUML-based domain model. We renamed Student to User, added email, role, and is_active attributes, and clarified the Enrollment class.

Our Take:

The visual rendering was instant and clean. We shared the PlantUML code in Slack, and our frontend team could already see the structure.

✅ Tip: Use

@notecomments in PlantUML to document assumptions.

@note right

This relationship may need a junction table if we add soft deletes

@end note

Step 3: ER Diagram (Logical Modeling)

Our Action:

AI auto-generated PKs, FKs, and cardinalities. We noticed a 1:N relationship between Course and Instructor—but we wanted one instructor per course, so we adjusted it to 1:1.

Our Take:

We double-checked cardinality with the team. A misstep here would’ve caused data anomalies.

✅ Tip: Always validate relationships with product owners before finalizing.

Step 4: Initial Schema (Physical Code Generation)

Our Action:

Generated PostgreSQL DDL with created_at, updated_at, and CHECK constraints.

Our Take:

We used this as the baseline for Flyway migrations. No more hand-written DDL—just version-controlled scripts.

✅ Tip: Export DDL early. We keep a

schema/initialfolder in Git.

Step 5: Normalization (Schema Optimization)

Our Action:

We walked through 1NF → 2NF → 3NF. At 2NF, the AI split Enrollment into Enrollment and EnrollmentHistory to eliminate partial dependencies.

Our Take:

We debated whether to keep it. Performance-wise, 3NF was slower for joins. So we denormalized slightly—added current_grade to Enrollment—and documented the tradeoff in the Final Report.

✅ Tip: Don’t blindly accept 3NF. Use it to understand the tradeoffs.

Step 6: Playground (Validation & Testing)

Our Action:

We launched the in-browser PostgreSQL instance. Used AI to generate 500 students, 100 courses, and 2,000 enrollments.

Our Take:

We ran a stress test: 100 concurrent enrollments. The schema held. We also tested:

-

Can a student enroll in the same course twice?

-

Can an instructor teach two courses at once?

The constraints prevented invalid data. We caught a bug in our logic before writing backend code.

✅ Tip: Generate 100s of records. Query performance only reveals itself at scale.

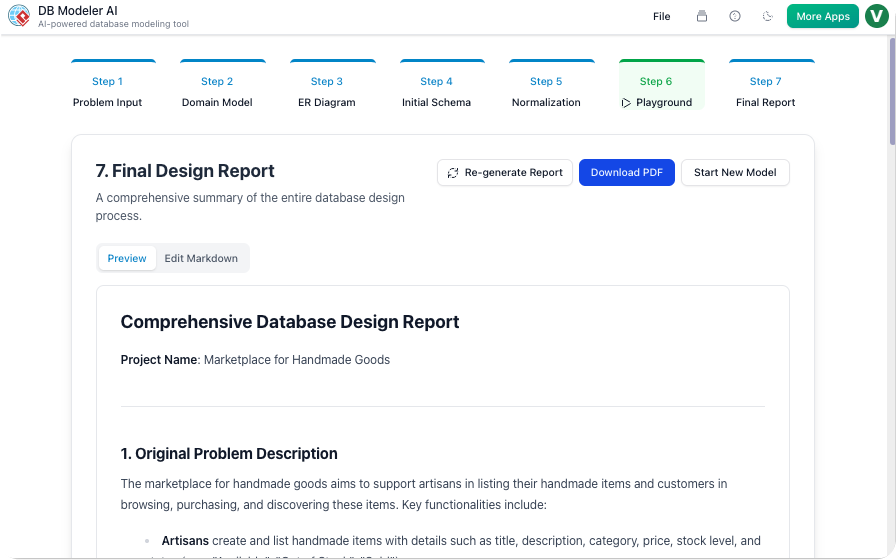

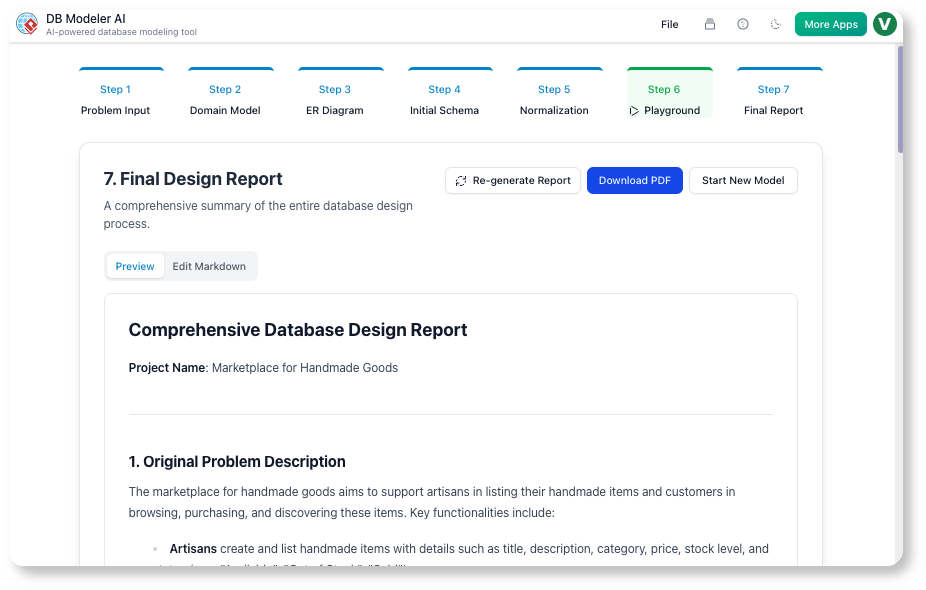

Step 7: Final Report (Documentation)

Our Action:

AI generated a Markdown report with:

-

Problem statement

-

Diagrams (PNG + PlantUML)

-

Final schema

-

Sample

INSERTstatements

We added a Design Decisions section:

“We denormalized

current_gradeto avoid JOINs in real-time enrollment queries. This improves performance at the cost of slightly increased write complexity.”

Our Take:

This report became our onboarding doc. New devs read it and understood the schema in 15 minutes.

✅ Tip: Use the Final Report as a handoff artifact to DevOps and QA.

Guidelines & Best Practices: What We Learned the Hard Way

| Practice | Our Lesson |

|---|---|

| Start small | We tried to model the entire university system in one go. Failed. Now we break it into modules: User, Course, Enrollment. |

| Version control PlantUML | We committed PlantUML files to Git. Diffs showed schema evolution. Huge win for audits. |

| Test with 100s of records | 10 test records hide performance issues. 500+ revealed slow JOINs. |

| Document assumptions | “No soft deletes” → later caused a bug. Now we document every assumption. |

| Integrate with CI/CD | We added a validate-schema.sh script that runs pglint on the exported DDL. |

Tips & Tricks for Power Users (Our Team’s Proven Shortcuts)

🔹 Prompt Engineering = Game-Changer

Instead of:

“Build a blog system”

We now use:

*”Design a PostgreSQL schema for a multi-tenant blog platform where:

Each tenant has isolated posts and comments

Posts support tags and scheduled publishing

Comments can be nested up to 3 levels

All tables include

created_atandupdated_at“*

Result: The AI generated a tenant-aware schema with proper isolation—something we’d have missed manually.

🔹 Use PlantUML Comments for Team Sync

We now annotate every major decision in PlantUML. Example:

' @team: Review this relationship—should we add a `soft_deleted` flag?

' @arch: Approved for v1.2. Will add in next sprint.

User "1" -- "0..*" Post : writes

🔹 Export Early, Export Often

We export DDL and Markdown after every major iteration. We have a schema/versions/ folder with v1.0.sql, v1.1.sql, etc. Perfect for rollback.

🔹 Pair with Visual Paradigm Desktop

For complex projects, we export the PlantUML to Desktop, reverse-engineer existing DBs, and generate SQL for MySQL or SQL Server.

🔹 Teach with Normalization Steps

We run a “Schema War Game” where juniors predict the next normalization step. The AI’s explanation wins every time.

Access, Licensing & Integration Notes (Our Team’s Setup)

| Aspect | Our Setup |

|---|---|

| Platform | Web-based via Visual Paradigm AI Toolbox |

| Licensing | Visual Paradigm Online Combo (required for AI features) |

| SQL Dialect | PostgreSQL (primary); Desktop edition for MySQL/SQL Server |

| Export Formats | DDL, Markdown, PDF, JSON, PlantUML |

| Team Collaboration | Git + Markdown + shared Playground links |

| Offline Use | Not needed—web version is fast and reliable |

💡 Pro Note: We’re upgrading to Teamwork Server for centralized model versioning and access control. Perfect for enterprise teams.

Conclusion: The Future of Database Design Is Collaborative, AI-Powered, and Human-Centric

After two months of real-world use, DBModeler AI has become a core part of our development workflow.

It’s not just faster—it’s smarter. It forces us to think critically about normalization, constraints, and edge cases. It democratizes database design across roles. And it reduces the risk of costly schema refactors by catching issues before they reach production.

The most valuable insight? AI doesn’t replace expertise—it elevates it. We’re not writing less code. We’re writing better code, faster, with more confidence.

If you’re tired of messy, undocumented, or broken schemas—if you want to design databases like a pro, without the steep learning curve—then DBModeler AI is not just a tool. It’s a game-changer.

Ready to Transform Your Database Workflow?

👉 Get Started with DBModeler AI

No installation. No setup. Just type your idea and build a production-ready schema in minutes.

References

- DB Modeler AI | AI-Powered Database Design Tool by Visual Paradigm: Official feature page detailing capabilities, use cases, and integration options for DBModeler AI.

- Mastering DBModeler AI by Visual Paradigm: In-depth tutorial and workflow walkthrough by a community expert, covering practical implementation strategies.

- DBModeler AI Tool Page: Interactive tool landing page with FAQs, feature highlights, and direct access to the AI generator.

- DBModeler AI Release Notes: Official update logs, new feature announcements, and version history from Visual Paradigm.

- DBModeler AI Database Generator Overview: Concise summary of the tool’s value proposition and 7-step workflow.

- Hospital Management System with DBModeler AI: Real-world case study demonstrating end-to-end database design for a healthcare domain.

- Visual Paradigm AI Toolbox – DBModeler AI App: Direct entry point to launch the web-based DBModeler AI application.

- DBModeler AI Video Walkthrough: Official video tutorial showcasing the interface, workflow, and key features in action.

- Free AI Use Case Diagram Analyzer Release: Context on Visual Paradigm’s broader AI toolbox ecosystem and access instructions for Online users.

- Desktop Integration Tutorial: Video guide on connecting DBModeler AI outputs with Visual Paradigm Desktop for advanced export and reverse-engineering workflows.

✅ Final Thought:

The best databases aren’t built in isolation. They’re co-created—by product, engineering, and AI.

With DBModeler AI, that collaboration is finally seamless.

Start building better data foundations—today.