The landscape of business process management is undergoing a profound transformation. For over a decade, Business Process Model and Notation (BPMN) has served as the universal language for describing workflows across industries. It provided a standardized way to map out complex operations, ensuring clarity between business stakeholders and technical developers. However, the integration of artificial intelligence (AI) and advanced automation technologies is pushing these standards beyond their original static definitions. We are witnessing a shift from static diagrams to dynamic, intelligent models that learn and adapt.

This guide explores the technical evolution of BPMN standards in the context of modern automation. We will examine how machine learning, process mining, and semantic enrichment are altering the way processes are modeled, executed, and governed. The goal is to provide a clear understanding of where current standards stand and where they are heading without relying on specific vendor implementations.

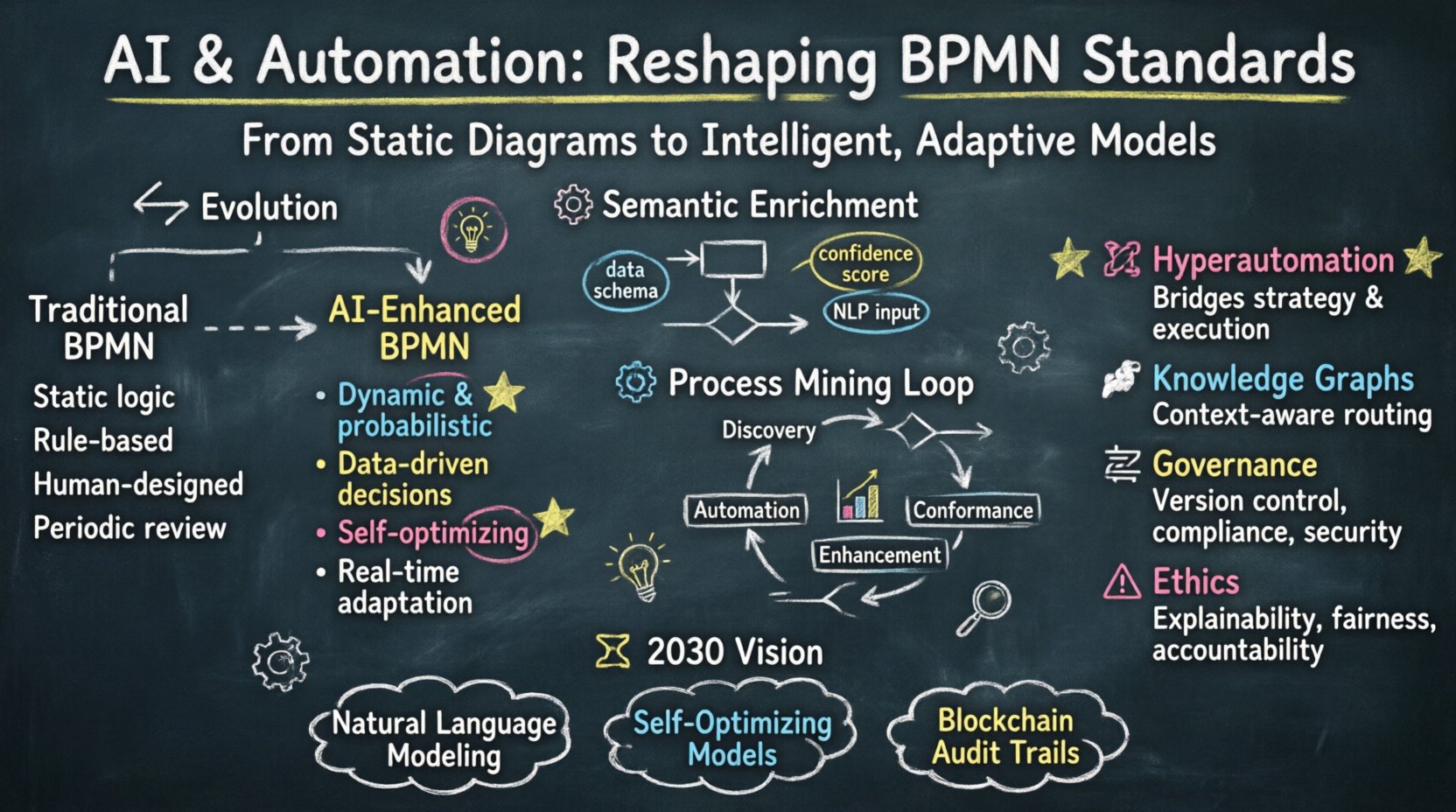

📉 The Evolution of Process Modeling: From Static to Dynamic

Traditionally, BPMN 2.0 focused on the representation of processes that were largely deterministic. A sequence flow indicated a specific path from one task to another, and gateways managed branching logic based on predefined conditions. While effective for stable environments, this approach struggled with variability and unpredictability inherent in modern business operations.

AI brings variability into the modeling layer itself. Instead of hardcoding every decision path, models can incorporate probabilistic elements. This shift requires the underlying standards to accommodate data-driven decision points alongside purely logical ones.

- Legacy Approach: Human designers define every step. Logic is fixed at design time.

- Modern Approach: AI algorithms determine the next best step based on real-time data.

- Standardization Challenge: How do we represent a probabilistic flow in a diagrammatic notation?
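The contrast between the legacy and modern approaches above can be sketched in a few lines. This is only an illustration under stated assumptions: the function names, the path names, the 10,000 limit, and the 0.7 confidence threshold are all hypothetical, and BPMN itself does not define a probabilistic gateway.

```python
def exclusive_gateway(order_total: float) -> str:
    """Legacy approach: a fixed condition, chosen at design time, selects the path."""
    return "manual_review" if order_total > 10_000 else "auto_approve"


def data_driven_gateway(
    path_scores: dict[str, float],
    min_confidence: float = 0.7,           # hypothetical threshold
    default_path: str = "manual_review",   # hypothetical safe default
) -> str:
    """Modern approach: follow the most likely path, unless the estimate is too weak.

    `path_scores` maps each outgoing path to a likelihood produced elsewhere,
    for example by a trained model. Here the scores are simply passed in.
    """
    best_path = max(path_scores, key=path_scores.get)
    if path_scores[best_path] < min_confidence:
        return default_path
    return best_path
```

The default path matters for the standardization question: a diagram can still show a finite set of outgoing flows, while the choice among them is delegated to a score rather than a boolean expression.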

Process definitions are no longer just documentation; they are executable contracts that interact with external data sources. This necessitates a rethinking of how connectors, events, and tasks are defined within the specification.

⚙️ AI Integration in Modeling: Semantic Enrichment

One of the most significant impacts of AI on BPMN is the move toward semantic enrichment. Traditional symbols, such as a “Task” or a “Gateway,” have generic meanings. In an AI-enhanced environment, these symbols carry additional metadata that describes their behavior, performance metrics, and learning capabilities.

Consider the concept of a “Service Task.” In the past, this might simply denote an API call. Today, that task might represent a machine learning model inference service. The standard must support attributes that describe input data types, confidence scores, and fallback mechanisms if the model fails.
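As a rough sketch of what such attributes could look like at runtime, the snippet below attaches an input schema, a confidence threshold, and a fallback to a task. `InferenceTaskSpec`, `run_inference_task`, and every field name are assumptions made for illustration; they are not part of the BPMN specification or of any engine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class InferenceTaskSpec:
    """Hypothetical metadata for a service task that wraps a model."""
    task_id: str
    input_schema: dict[str, type]  # expected field names and Python types
    min_confidence: float          # below this, the fallback is used
    fallback_task: str             # task to hand over to when the model is unusable


def run_inference_task(
    spec: InferenceTaskSpec,
    payload: dict,
    predict: Callable[[dict], tuple[str, float]],
) -> tuple[str, str]:
    """Return (outcome, source); source is 'model' or the fallback task id."""
    # Reject payloads that do not match the declared input schema.
    for name, expected_type in spec.input_schema.items():
        if not isinstance(payload.get(name), expected_type):
            return ("unresolved", spec.fallback_task)
    # A failing model call is treated the same way as a low-confidence answer.
    try:
        label, confidence = predict(payload)
    except Exception:
        return ("unresolved", spec.fallback_task)
    if confidence < spec.min_confidence:
        return ("unresolved", spec.fallback_task)
    return (label, "model")
```

Passing `predict` in as a callable keeps the sketch independent of any particular model-serving library.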

Key areas of semantic evolution include:

- Data Context: Tasks now require explicit definitions of the data schemas they consume and produce to enable downstream automation.

- Intent Recognition: Gateways may evolve to include natural language processing (NLP) capabilities, allowing them to interpret unstructured text inputs.

- Adaptive Logic: Decision points may utilize predictive analytics to route processes based on likelihood rather than binary conditions.

This enrichment allows process models to become more than visual representations; they become living documents that machines can interpret directly for execution and optimization.

🤖 Automation and Hyperautomation

Automation technologies, ranging from Robotic Process Automation (RPA) to intelligent orchestration platforms, demand higher fidelity from process models. The term “hyperautomation” describes the combined use of multiple technologies to automate as many business and IT processes as possible. For BPMN to support this, it must bridge the gap between high-level business strategy and low-level technical execution.

Automation bots need precise instructions, and BPMN 2.0 can supply them because it defines execution semantics as well as notation. However, as automation becomes more autonomous, the distinction between “design” and “execution” blurs. Models must support continuous deployment and self-healing mechanisms.

Key automation capabilities influencing standards:

- Event-Driven Architectures: Processes must react to events in real time, which requires BPMN to better support asynchronous messaging and event triggers.

- Human-in-the-Loop: Automation does not replace humans; it augments them. Standards must clearly define when a process requires human intervention and how that intervention is captured for auditability.

- Orchestration Complexity: Managing multiple microservices and legacy systems requires a notation that can handle distributed transactions and complex error handling without becoming visually cluttered.
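The human-in-the-loop point can be made concrete with a small sketch: a task is automated below a limit and handed to a person above it, and either way the decision is written to an audit log. The class names, the `approve_refund` task, and the 100.0 limit are hypothetical choices for this example only.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class AuditEntry:
    task_id: str
    actor: str      # "automation" or "human"
    decision: str
    timestamp: str  # ISO 8601, UTC


@dataclass
class ProcessInstance:
    instance_id: str
    audit_log: list[AuditEntry] = field(default_factory=list)

    def record(self, task_id: str, actor: str, decision: str) -> None:
        now = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditEntry(task_id, actor, decision, now))


def approve_refund(
    instance: ProcessInstance,
    amount: float,
    human_decide: Callable[[float], str],
    auto_limit: float = 100.0,  # hypothetical threshold for automation
) -> str:
    """Automate small refunds, escalate larger ones, and log who decided."""
    if amount <= auto_limit:
        decision, actor = "approved", "automation"
    else:
        decision, actor = human_decide(amount), "human"
    instance.record("approve_refund", actor, decision)
    return decision
```

Recording the actor alongside the decision is what makes the intervention auditable later, regardless of which branch ran.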

📊 Process Mining and Data Feedback Loops

Process mining extracts knowledge from event logs to discover, monitor, and improve real processes. This technology creates a feedback loop where the actual execution data informs the model. BPMN standards must accommodate the integration of these logs to ensure that the model reflects reality, not just theory.

When process mining identifies deviations, the standard should support the versioning and updating of the BPMN diagram to reflect these findings. This creates a cycle of continuous improvement where the model evolves alongside the business.

The relationship between models and data looks like this:

- Discovery: Mining algorithms analyze logs to find the actual flow.

- Conformance: The discovered flow is compared against the BPMN model to find deviations.

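- Enhancement: The model is extended or corrected with information from the logs, such as timing and frequency data, which predictive analytics can then use to forecast future process behavior.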

- Automation: The refined model drives automated execution with tighter controls.

This feedback loop requires the notation to support metadata that links specific tasks to specific data entities found in the logs. Without this linkage, the model remains an abstract concept disconnected from operational reality.
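The discovery and conformance steps can be illustrated with a directly-follows count, one of the simplest building blocks in process mining. This is a minimal sketch, assuming the event log is a list of `(case_id, activity)` pairs already sorted by timestamp and that the model is reduced to a set of allowed transitions; real tools work with richer logs and real BPMN semantics.

```python
from collections import Counter, defaultdict


def discover_directly_follows(event_log: list[tuple[str, str]]) -> Counter:
    """Count how often activity A is directly followed by activity B within a case."""
    traces: dict[str, list[str]] = defaultdict(list)
    for case_id, activity in event_log:
        traces[case_id].append(activity)
    counts: Counter = Counter()
    for activities in traces.values():
        # Pair each activity with its successor in the same case.
        counts.update(zip(activities, activities[1:]))
    return counts


def find_deviations(
    observed: Counter,
    allowed: set[tuple[str, str]],
) -> dict[tuple[str, str], int]:
    """Return observed transitions that the model does not permit."""
    return {pair: n for pair, n in observed.items() if pair not in allowed}
```

For example, if the model allows only `receive → check → approve` and one case in the log jumps from `receive` straight to `approve`, that transition is reported as a deviation together with how often it occurred.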

🧠 Semantic Enrichment and Knowledge Graphs

To support advanced AI, BPMN is increasingly interacting with knowledge graphs. These graphs map the relationships between entities, such as customers, orders, and products, providing a rich context for process execution. Integrating knowledge graphs into BPMN allows processes to understand the “why” behind a decision, not just the “how”.

For example, a process might check a knowledge graph to determine if a customer is high-risk before approving a transaction. This requires the BPMN model to reference external ontologies. The standard must define how these references are structured and validated.

Benefits of knowledge graph integration:

- Contextual Awareness: Processes can access broader business intelligence during execution.

- Dynamic Routing: Paths can change based on real-time entity relationships.

- Interoperability: Standardized ontologies allow different systems to understand process data consistently.
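The high-risk customer check described above can be sketched with a tiny in-memory graph of subject-predicate-object triples. The entities, predicates, and path names are invented for illustration; a production setup would query a graph store through its own API and a shared ontology.

```python
# Hypothetical knowledge graph stored as (subject, predicate, object) triples.
TRIPLES = {
    ("customer:42", "placed", "order:1001"),
    ("customer:42", "located_in", "country:X"),
    ("country:X", "risk_level", "high"),
    ("customer:7", "located_in", "country:Y"),
    ("country:Y", "risk_level", "low"),
}


def objects(subject: str, predicate: str) -> set[str]:
    """Return every object linked to `subject` through `predicate`."""
    return {o for s, p, o in TRIPLES if s == subject and p == predicate}


def is_high_risk(customer: str) -> bool:
    """A customer counts as high-risk if any linked country is rated high."""
    return any(
        "high" in objects(country, "risk_level")
        for country in objects(customer, "located_in")
    )


def route_transaction(customer: str) -> str:
    """Dynamic routing: the path depends on relationships in the graph."""
    return "enhanced_due_diligence" if is_high_risk(customer) else "standard_approval"
```

The routing decision here follows a two-hop relationship (customer to country to risk level), which is the kind of context a flat process variable would not carry.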

⚖️ Governance and Standardization Challenges

As standards evolve, governance becomes critical. The Object Management Group (OMG) maintains the BPMN specification, and BPMN 2.0.1 is also published as ISO/IEC 19510:2013, but rapid technological change often outpaces formal standardization. Organizations must balance adherence to established norms with the adoption of new capabilities.

Key governance areas include:

- Version Control: Managing changes to models that affect legacy systems and new deployments.

- Compliance: Ensuring automated processes adhere to regulatory requirements, especially when AI makes decisions.

- Security: Protecting the data flows defined within the model from unauthorized access.

Organizations need a governance framework that allows agile updates to their BPMN models and modeling conventions without sacrificing stability. This often involves defining internal extensions on top of the base standard, using BPMN's own extension mechanism, and validating them against core compliance rules.
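One way to picture such an internal extension is a custom element placed inside BPMN's `extensionElements` container and checked by a small validator. In the sketch below, the BPMN model namespace is the one defined by the OMG specification, while the `ai` namespace, the `inference` element, its attributes, and the compliance rule itself are assumptions made for this example.

```python
import xml.etree.ElementTree as ET

BPMN_NS = "http://www.omg.org/spec/BPMN/20100524/MODEL"
EXT_NS = "http://example.com/bpmn/ai-extensions"  # hypothetical internal namespace

MODEL_XML = f"""
<bpmn:definitions xmlns:bpmn="{BPMN_NS}" xmlns:ai="{EXT_NS}"
                  targetNamespace="http://example.com/claims">
  <bpmn:process id="claims">
    <bpmn:serviceTask id="score_claim" name="Score claim">
      <bpmn:extensionElements>
        <ai:inference minConfidence="0.8" fallbackTask="manual_triage"/>
      </bpmn:extensionElements>
    </bpmn:serviceTask>
    <bpmn:serviceTask id="classify_doc" name="Classify document">
      <bpmn:extensionElements>
        <ai:inference minConfidence="0.6"/>
      </bpmn:extensionElements>
    </bpmn:serviceTask>
    <bpmn:userTask id="manual_triage" name="Manual triage"/>
  </bpmn:process>
</bpmn:definitions>
""".strip()


def validate_extensions(xml_text: str) -> list[str]:
    """Hypothetical rule: every AI-backed service task names an existing fallback."""
    root = ET.fromstring(xml_text)
    known_ids = {el.get("id") for el in root.iter() if el.get("id")}
    problems = []
    for task in root.iter(f"{{{BPMN_NS}}}serviceTask"):
        for ext in task.iter(f"{{{EXT_NS}}}inference"):
            fallback = ext.get("fallbackTask")
            if fallback is None:
                problems.append(f"{task.get('id')}: missing fallbackTask")
            elif fallback not in known_ids:
                problems.append(f"{task.get('id')}: unknown fallbackTask {fallback}")
    return problems
```

Because the custom data lives in its own namespace, tools that only understand the base standard can still read the model, and the validator can run as a gate before deployment.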

🔮 Future Scenarios for 2030

Looking ahead, several scenarios are plausible for the next decade. Process models may become self-generating, created automatically from natural language descriptions. This would democratize process modeling, allowing business users to define workflows without technical knowledge.

Another scenario involves the rise of “Cognitive BPMN.” In this scenario, the diagram itself contains the logic for machine learning training. The visual elements would represent not just steps but also the training data required for those steps.

Potential future developments:

- Natural Language Modeling: Users describe a process in text, and the system generates the BPMN diagram.

- Self-Optimizing Models: Processes automatically reconfigure themselves to minimize cost or time based on performance data.

- Blockchain Integration: Immutable records of process execution stored on distributed ledgers for maximum auditability.
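The auditability idea behind the last item can be shown without a full distributed ledger. The sketch below only demonstrates hash-linking, where each execution record stores the hash of the previous one so that later tampering is detectable; it assumes a single local list and leaves out consensus, replication, and signatures. The record layout and function names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first record


def _digest(event: dict, prev_hash: str) -> str:
    """Hash the event together with the previous record's hash."""
    body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(chain: list[dict], event: dict) -> None:
    """Append an execution record linked to the current end of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append(
        {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    )


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every hash; any edited record breaks the chain from that point on."""
    prev_hash = GENESIS_HASH
    for record in chain:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(record["event"], prev_hash):
            return False
        prev_hash = record["hash"]
    return True
```

Changing any stored event after the fact causes `verify_chain` to return `False`, which is the property that makes such a log useful as audit evidence.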

⚠️ Ethical Considerations in Automated Processes

As automation becomes more autonomous, ethical considerations become part of the modeling standard. Bias in AI algorithms can lead to unfair process outcomes. The BPMN notation may need to include specific markers for ethical decision points where human oversight is required.

Transparency is key. Stakeholders must understand why a process took a certain path. This requires the model to be auditable, explaining the reasoning behind automated decisions.

Important ethical factors:

- Explainability: Models must support the generation of explanations for decisions made by AI components.

- Fairness: Automated routing must be tested for bias against different demographic groups.

- Accountability: Clear lines of responsibility must be defined in the process model for automated actions.
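The fairness item can be turned into a simple check over routing outcomes. The sketch below compares how often each group is routed to automatic approval and reports the ratio between the lowest and highest rate. The group labels, the `auto_approve` outcome name, and the choice of this particular metric are assumptions for illustration; a real bias assessment would use several metrics and domain review.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, str]]) -> dict[str, float]:
    """Share of cases per group that were routed to 'auto_approve'.

    `decisions` holds (group, outcome) pairs taken from executed process instances.
    """
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        if outcome == "auto_approve":
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group was approved, so there is no disparity to measure
    return min(rates.values()) / highest
```

A ratio well below 1.0 does not prove bias on its own, but it is a cheap signal that a routing rule deserves closer inspection before it is deployed.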

📋 Comparison: Traditional vs. AI-Enhanced BPMN

The following comparison summarizes how current standards differ from future requirements across key attributes.

| Attribute | Traditional BPMN | AI-Enhanced BPMN |
|---|---|---|
| Logic Type | Static, Rule-Based | Dynamic, Probabilistic |
| Data Usage | Structured Inputs | Structured & Unstructured Data |
| Execution | Human-Driven Workflow | Autonomous Orchestration |
| Optimization | Periodic Review | Real-Time Adaptation |
| Complexity | Visual Clarity | Semantic Depth |

This table highlights the shift from a visual documentation tool to a functional, intelligent engine. The notation is becoming more abstract in appearance but richer in capability.

🛠️ Implementation Strategies for Organizations

Organizations looking to adopt these changes should not attempt to overhaul their entire process architecture overnight. A phased approach is necessary to ensure stability.

- Assess Current Maturity: Determine if existing processes are stable enough for automation. If the process changes daily, automation will struggle.

- Start with Hybrid Models: Combine static BPMN with AI components for specific decision points rather than replacing the whole model.

- Invest in Data Quality: AI models are only as good as the data they are trained on. Ensure event logs are clean and consistent.

- Train Teams: Process analysts need skills in data science and AI, not just modeling. Cross-functional teams work best.

🔗 Final Thoughts on the Trajectory of Standards

The future of BPMN is one of integration and intelligence. It will not disappear but will evolve to support the complex, data-driven environments of modern enterprise. By embracing semantic enrichment, process mining, and ethical governance, the standard will remain relevant and powerful.

Stakeholders must remain vigilant. As technology advances, the definition of a “process” changes. It is no longer just a sequence of tasks but a continuous stream of value creation driven by data and intelligence. Keeping pace with these changes requires a commitment to continuous learning and adaptation.

For organizations, the opportunity lies in leveraging these new capabilities to create more resilient and responsive operations. The standards will provide the framework, but the success depends on how effectively they are applied to real-world challenges.