Designing robust transactional workflows requires more than standard modeling. When systems process thousands of operations per second, the nuances of the Business Process Model and Notation (BPMN) become critical. This guide explores advanced patterns specifically tailored for high-volume environments. We focus on structural integrity, concurrency management, and performance optimization without relying on specific vendor tools.

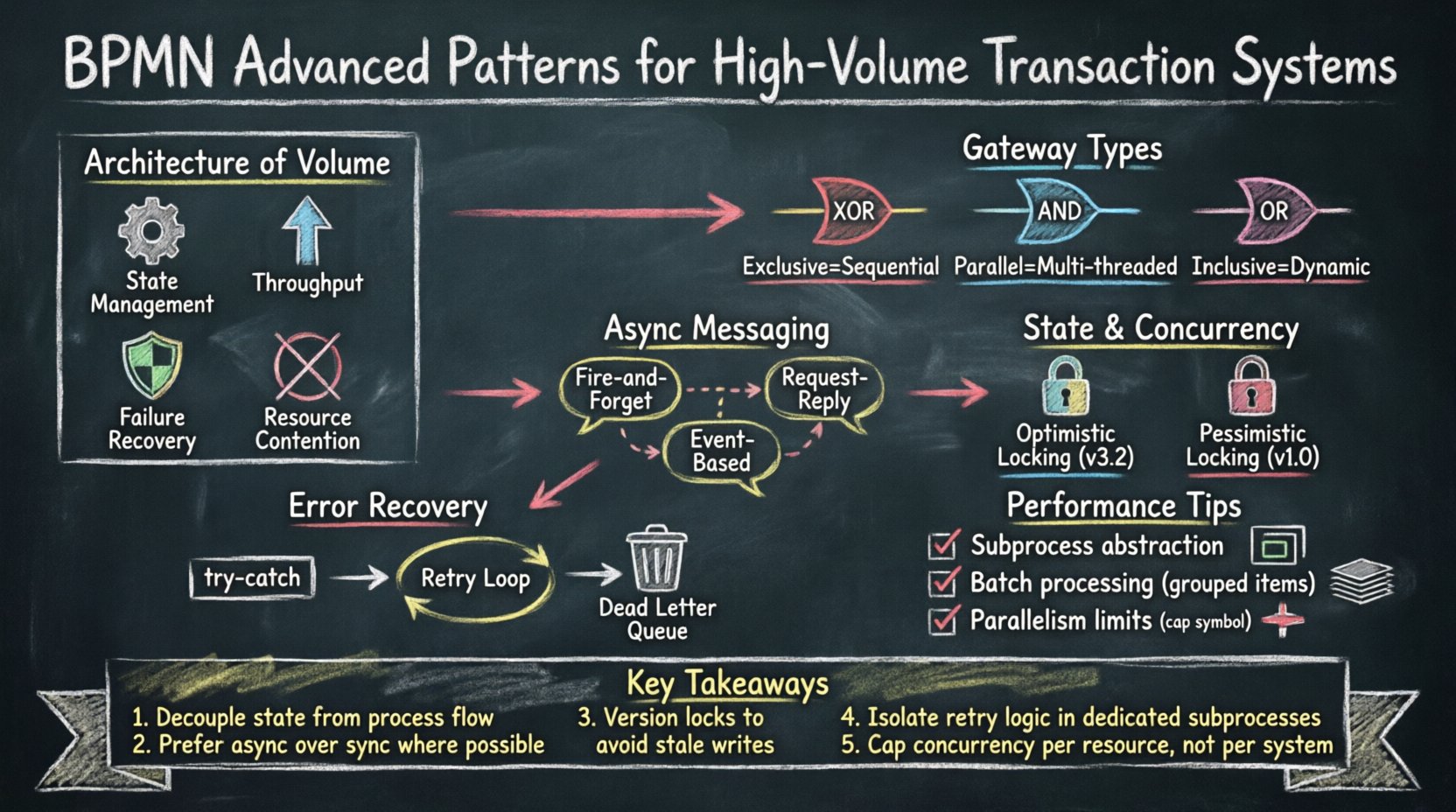

📊 The Architecture of Volume

High-volume transaction systems differ fundamentally from standard operational workflows. In a typical business process, latency is tolerable and human intervention is common. In a transactional engine, milliseconds matter and every step must run without manual intervention. The process model acts as the blueprint for concurrency control and resource allocation.

When scaling to millions of records, several factors shift the priority of design:

- State Management: Every step in the process must maintain data integrity.

- Throughput: The model must allow parallel execution where logically safe.

- Failure Recovery: Rollback and compensation paths must be modeled explicitly and must be safe to re-run.

- Resource Contention: Locking strategies affect how many processes can run simultaneously.

Modeling these constraints requires a shift from linear thinking to distributed logic. Standard BPMN elements function differently under load. Understanding these behaviors allows architects to build systems that remain stable during peak demand.

🔀 Gateway Mechanisms at Scale

Gateways dictate the flow of control. In high-volume systems, the choice of gateway impacts performance significantly. Incorrect usage can create bottlenecks where all threads must wait for a single condition, negating parallelism.

Three primary gateway types require careful selection:

- Exclusive Gateways: Route to one path based on data. Low overhead, but sequential decision making.

- Parallel Gateways: Spawn multiple paths simultaneously. High throughput, but requires synchronization.

- Inclusive Gateways: Route to multiple paths based on conditions. Complex state tracking required.

| Gateway Type | Concurrency Impact | Best Use Case |
|---|---|---|
| Exclusive Gateway | Low (Sequential) | Simple Decision Logic |
| Parallel Gateway | High (Concurrent Branches) | Independent Validation Steps |
| Inclusive Gateway | Medium (Dynamic) | Conditional Feature Flags |
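To make the routing semantics concrete, here is a minimal Python sketch of how the three split gateways choose outgoing paths. The `Flow` tuple and the function names are hypothetical and not taken from any engine's API. The behavior follows the usual BPMN semantics: exclusive takes the first matching condition and inclusive takes every matching one, each falling back to a default flow, while parallel takes every path unconditionally.

```python
from typing import Callable, List, Optional, Tuple

# A hypothetical outgoing sequence flow: target activity name plus a condition on process data.
Flow = Tuple[str, Callable[[dict], bool]]


def exclusive_gateway(flows: List[Flow], data: dict, default: Optional[str] = None) -> List[str]:
    """XOR split: follow the first flow whose condition holds, else the default flow."""
    for target, condition in flows:
        if condition(data):
            return [target]
    if default is None:
        raise RuntimeError("no condition matched and no default flow is defined")
    return [default]


def inclusive_gateway(flows: List[Flow], data: dict, default: Optional[str] = None) -> List[str]:
    """OR split: follow every flow whose condition holds, else the default flow."""
    targets = [target for target, condition in flows if condition(data)]
    if targets:
        return targets
    if default is None:
        raise RuntimeError("no condition matched and no default flow is defined")
    return [default]


def parallel_gateway(flows: List[Flow]) -> List[str]:
    """AND split: follow every outgoing flow; conditions are not evaluated."""
    return [target for target, _ in flows]


flows: List[Flow] = [
    ("fraud_check", lambda d: d["amount"] > 10_000),
    ("kyc_check", lambda d: d["new_customer"]),
]
order = {"amount": 50_000, "new_customer": True}
print(exclusive_gateway(flows, order))  # ['fraud_check']
print(inclusive_gateway(flows, order))  # ['fraud_check', 'kyc_check']
print(parallel_gateway(flows))          # ['fraud_check', 'kyc_check']
```

The list an inclusive split returns is what makes its matching join expensive: the join has to remember which branches were actually activated before it can continue.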

For transactional systems, Parallel Gateways are often preferred for splitting work, provided the downstream branches are independent. If branches share a resource, such as a database record, the model must include synchronization logic. Without it, race conditions can occur, leading to lost updates and corrupted data.
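The following sketch assumes Python threads stand in for engine worker threads. It shows a parallel split and join over independent checks, plus a lock around the one shared record. The validation functions and the `Ledger` class are hypothetical. CPython threads mostly help with I/O-bound branches such as remote calls; CPU-bound branches would need processes or a different runtime.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


# Hypothetical independent validation steps: each reads the transaction but writes nothing shared.
def check_format(txn: dict) -> bool:
    return txn["account"].isalnum()


def check_limit(txn: dict) -> bool:
    return txn["amount"] <= 10_000


def check_currency(txn: dict) -> bool:
    return txn["currency"] in {"EUR", "USD"}


VALIDATIONS = [check_format, check_limit, check_currency]


def validate_in_parallel(txn: dict, pool: ThreadPoolExecutor) -> bool:
    # Parallel split: start every independent branch at once.
    futures = [pool.submit(check, txn) for check in VALIDATIONS]
    # Parallel join: wait for every branch before continuing.
    results = [f.result() for f in futures]
    return all(results)


class Ledger:
    """A shared record touched by several branches (hypothetical)."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self.balance = 0

    def post(self, amount: int) -> None:
        # Without the lock, two branches could both read the old balance
        # and one update would be lost (a race condition).
        with self._lock:
            self.balance += amount


with ThreadPoolExecutor(max_workers=4) as pool:
    txn = {"account": "DE89370400440532", "amount": 250, "currency": "EUR"}
    print(validate_in_parallel(txn, pool))  # True
    ledger = Ledger()
    for f in [pool.submit(ledger.post, 1) for _ in range(1000)]:
        f.result()
    print(ledger.balance)  # 1000
```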

📨 Asynchronous Messaging Patterns

Blocking operations reduce throughput. If a process waits for an external system to respond, the entire transaction thread is occupied. Asynchronous messaging decouples the process from the response time of dependent services.

This pattern uses message events. Instead of blocking until a reply arrives, the process sends a message (through a Send Task or an Intermediate Message Throw Event) and then waits at an Intermediate Message Catch Event. Engines typically persist the instance at such a wait state, so their threads are free to process other transactions while the original one waits for confirmation.

- Fire-and-Forget: Send data without expecting an immediate response. Use when the action is non-critical.

- Request-Reply: Send a message and wait for a reply carrying the matching correlation ID. Use when data consistency is required.

- Event-Based: Listen for external events to trigger the next step. Use for decoupled microservices.

Implementing this requires a reliable message broker. The process model must handle cases where messages are lost or delayed. Timer events often accompany message events to prevent indefinite waiting, typically as a Timer Boundary Event on the waiting activity (such as a Receive Task) or as a competing branch after an Event-Based Gateway. If a message does not arrive within a set timeframe, the process should trigger a retry or alert mechanism.
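Here is a minimal asyncio sketch of the Request-Reply pattern with a timer guard. It assumes an in-process `Correlator` (hypothetical) in place of a real broker's reply queue. The sender registers a correlation ID, hands the message off, and waits with a timeout. A reply with an unknown or expired ID is ignored, and repeated timeouts end in an alert.

```python
import asyncio
import uuid
from typing import Any, Awaitable, Callable, Dict, Tuple


class Correlator:
    """Matches replies to waiting requests by correlation ID (hypothetical helper)."""

    def __init__(self) -> None:
        self._pending: Dict[str, asyncio.Future] = {}

    def register(self) -> Tuple[str, asyncio.Future]:
        correlation_id = str(uuid.uuid4())
        future = asyncio.get_running_loop().create_future()
        self._pending[correlation_id] = future
        return correlation_id, future

    def deliver(self, correlation_id: str, payload: Any) -> bool:
        # Called by the broker consumer when a reply arrives.
        future = self._pending.pop(correlation_id, None)
        if future is None or future.done():
            return False  # late or duplicate reply: nobody is waiting for it
        future.set_result(payload)
        return True

    def discard(self, correlation_id: str) -> None:
        self._pending.pop(correlation_id, None)


async def request_reply(
    correlator: Correlator,
    send: Callable[[str], Awaitable[None]],
    timeout: float,
    retries: int,
) -> Any:
    for _ in range(retries + 1):
        correlation_id, reply = correlator.register()
        await send(correlation_id)  # message throw: hand the request to the broker
        try:
            # Message catch guarded by a timer: wait, but never indefinitely.
            return await asyncio.wait_for(reply, timeout)
        except asyncio.TimeoutError:
            correlator.discard(correlation_id)  # abandon this attempt and retry
    raise TimeoutError("no reply after all retries; raise an alert")


async def demo() -> Tuple[Any, int]:
    correlator = Correlator()
    loop = asyncio.get_running_loop()
    sent = []

    async def flaky_send(correlation_id: str) -> None:
        sent.append(correlation_id)
        if len(sent) > 1:  # simulate a lost first message; later ones get a reply
            loop.call_later(0.01, correlator.deliver, correlation_id, {"status": "approved"})

    result = await request_reply(correlator, flaky_send, timeout=0.2, retries=2)
    return result, len(sent)


print(asyncio.run(demo()))  # ({'status': 'approved'}, 2)
```

Registering a fresh correlation ID per attempt is a deliberate choice: a late reply to an abandoned attempt cannot be mistaken for the answer to the current one.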

⚙️ Managing State and Concurrency

State management is the backbone of transactional consistency. In a distributed environment, a process instance represents a specific unit of work. Managing the state of this unit ensures that no two processes corrupt the same data.

Key considerations include:

- Optimistic Locking: Allow multiple processes to read data. Update only if no other process modified it since the read.

- Pessimistic Locking: Lock the data immediately upon access. Other processes cannot modify it (and, depending on the lock mode, cannot read it) until the lock is released.

- Versioning: Attach version numbers to data objects. Verify the version before committing changes.

The process model should reflect these locking strategies. If a task requires a lock, the BPMN diagram should show a Task node that performs the locking operation. This makes the constraint visible to developers and auditors.

Long-running processes present unique challenges. If a transaction takes hours, the engine must persist its state rather than hold it in memory. Wait states such as Timer and Message Intermediate Catch Events break long processes into manageable chunks: at each one, the engine persists the instance and releases its resources. This prevents memory exhaustion and allows the system to recover from crashes without losing progress.

🛡️ Compensation and Error Recovery

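Failures are inevitable in high-volume systems. The process model must define how to handle these failures explicitly. In general-purpose code, error handling relies on exceptions. In BPMN, the equivalent constructs are Error End Events, which throw an error, and Error Boundary Events attached to tasks or subprocesses, which catch it; Error Start Events in event subprocesses can catch errors as well.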

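Compensation is the act of undoing work that has already completed. If a transaction fails halfway through, the system must revert the earlier steps to maintain data integrity. This is distinct from a simple rollback, which can only discard uncommitted changes. Compensation runs explicit undo activities, such as issuing a refund or releasing reserved stock, for steps that have already committed, and it allows for partial reversals.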

Effective error handling patterns include:

- Try-Catch Blocks: Wrap a section of the process in a subprocess with an Error Boundary Event. If an error occurs, the boundary event routes the flow to the compensation handler.

- Retry Loops: Attempt the action a set number of times before escalating.

- Dead Letter Queues: Move failed transactions to a separate queue for manual review.

| Strategy | Complexity | Best Suited For |
|---|---|---|
| Immediate Retry | Low | Temporary Network Glitches |
| Exponential Backoff | Medium | System Overload |
| Compensation Handler | High | Business Logic Errors |
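The retry and dead-letter patterns can be combined in a few lines. In this hypothetical sketch, `TransientError` marks failures worth retrying, the delays follow the exponential-backoff row of the table (with optional jitter), and a plain list stands in for a broker's dead letter queue. The `sleep` parameter is injectable only so the delays can be inspected.

```python
import random
import time
from typing import Any, Callable, List, Tuple


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, overload)."""


def backoff_delay(attempt: int, base: float = 0.1, cap: float = 5.0) -> float:
    """Exponential backoff: base * 2**(attempt - 1) seconds, never more than cap."""
    return min(cap, base * 2 ** (attempt - 1))


def process_with_retry(
    item: Any,
    handler: Callable[[Any], Any],
    dead_letters: List[Tuple[Any, str]],
    max_attempts: int = 4,
    jitter: bool = True,
    sleep: Callable[[float], None] = time.sleep,
) -> Any:
    """Retry transient failures with backoff, then dead-letter the item.

    Other exceptions (e.g. business-rule violations) are not caught here, so
    they propagate to the caller, which can route them to compensation.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return handler(item)
        except TransientError as exc:
            if attempt == max_attempts:
                # Out of attempts: park the item for manual review instead of blocking.
                dead_letters.append((item, repr(exc)))
                return None
            delay = backoff_delay(attempt)
            # "Full jitter" spreads retries so failing instances do not retry in lockstep.
            sleep(random.uniform(0, delay) if jitter else delay)


attempts = {"n": 0}


def flaky_payment(amount: int) -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise TransientError("gateway timeout")
    return f"charged {amount}"


dead_letters: List[Tuple[Any, str]] = []
slept: List[float] = []
print(process_with_retry(42, flaky_payment, dead_letters, jitter=False, sleep=slept.append))  # charged 42
print(slept)  # [0.1, 0.2]
```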

When designing compensation handlers, ensure they are idempotent: running the compensation logic twice must leave the system in the same state as running it once. This is crucial because the error event itself might be triggered multiple times if the system restarts.
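One way to make compensation idempotent is to record which steps have already been undone, as in this minimal saga-style sketch. The `Saga` class and the step names are hypothetical, and the record is kept in memory here; a real system would persist it. Even then, a crash between an undo and its record would repeat that one undo, so the undo actions themselves (for example, a refund keyed by payment ID) should also be safe to repeat.

```python
from typing import Callable, List, Set, Tuple


class Saga:
    """Minimal saga sketch: each completed step registers an undo action."""

    def __init__(self) -> None:
        self._completed: List[Tuple[str, Callable[[], None]]] = []
        # In production this set would live in durable storage so it survives
        # a restart; here it is kept in memory for illustration.
        self._compensated: Set[str] = set()

    def run_step(self, name: str, action: Callable[[], None], undo: Callable[[], None]) -> None:
        action()
        # Only steps that actually completed are compensated later.
        self._completed.append((name, undo))

    def compensate(self) -> None:
        # Undo in reverse order; skipping already-undone steps makes a second
        # call (for example after the error event fires again) a no-op.
        for name, undo in reversed(self._completed):
            if name in self._compensated:
                continue
            undo()
            self._compensated.add(name)


log: List[str] = []


def ship() -> None:
    raise RuntimeError("carrier rejected the shipment")


saga = Saga()
try:
    saga.run_step("reserve_stock", lambda: log.append("reserved"), lambda: log.append("released"))
    saga.run_step("charge_card", lambda: log.append("charged"), lambda: log.append("refunded"))
    saga.run_step("ship", ship, lambda: log.append("shipment cancelled"))
except RuntimeError:
    saga.compensate()
    saga.compensate()  # duplicate trigger: nothing happens the second time

print(log)  # ['reserved', 'charged', 'refunded', 'released']
```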

📈 Performance Tuning via Modeling

Optimization begins at the design phase. A well-structured model reduces runtime overhead. Several modeling techniques directly influence performance metrics.

Subprocess Abstraction

Using Subprocesses helps manage complexity. Collapsing a subprocess is a purely visual choice: it hides internal details from readers of the diagram, while the engine executes the same logic whether the subprocess is drawn collapsed or expanded. Expanded subprocesses make the internal steps visible during debugging. For high-volume systems, keep complex logic in separate subprocesses. This isolates failures and allows for specific tuning of the internal logic.

Batch Processing

Processing records one by one is inefficient. Batch processing groups transactions. In BPMN, this is modeled using a loop or multi-instance activity. The process iterates over a collection of items, processing a set number before committing to the database. This reduces the number of database round trips and transaction commits.

- Fixed Batch Size: Process a fixed number of items per commit, for example 100.

- Time-Based Batch: Process items until a time limit passes, for example 5 seconds.

- Volume-Based Batch: Process items until the total payload size reaches a threshold.
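Here is a sketch of fixed-size and time-based batching, assuming SQLite as the target database and a hypothetical `txn` table. Each batch is written with one `executemany` call and one commit. The time limit is only checked when an item arrives, a simplification noted in the docstring; a volume-based limit would add a running byte count to the same flush condition.

```python
import sqlite3
import time
from typing import Callable, Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batches(
    items: Iterable[T],
    max_size: int = 100,
    max_seconds: float = 5.0,
    clock: Callable[[], float] = time.monotonic,
) -> Iterator[List[T]]:
    """Yield a batch when it reaches max_size items or has been open max_seconds.

    The time limit is only checked when a new item arrives; a real engine
    would also flush on a timer when the stream goes quiet.
    """
    batch: List[T] = []
    opened_at = 0.0
    for item in items:
        if not batch:
            opened_at = clock()
        batch.append(item)
        if len(batch) >= max_size or clock() - opened_at >= max_seconds:
            yield batch
            batch = []
    if batch:
        yield batch  # flush the remainder


def write_in_batches(conn: sqlite3.Connection, rows: Iterable[tuple], max_size: int = 100) -> int:
    commits = 0
    for batch in batches(rows, max_size=max_size):
        conn.executemany("INSERT INTO txn (id, amount) VALUES (?, ?)", batch)
        conn.commit()  # one commit per batch instead of one per row
        commits += 1
    return commits


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE txn (id INTEGER PRIMARY KEY, amount INTEGER NOT NULL)")
rows = [(i, i * 10) for i in range(250)]
print(write_in_batches(conn, rows))                            # 3
print(conn.execute("SELECT COUNT(*) FROM txn").fetchone()[0])  # 250
```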

Parallelism Limits

Unlimited parallelism can overwhelm system resources, so the design should define concurrency limits. These limits are usually enforced by the execution engine (for example, through its worker or thread-pool settings) rather than by a standard BPMN attribute, but the process design should respect them. Cap the number of parallel paths a gateway or multi-instance activity can create; for example, limit the number of validation tasks running at once to prevent CPU saturation.
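If the engine's own settings are not fine-grained enough, a per-activity cap can be sketched with a semaphore. In this hypothetical example, a pool of 16 worker threads runs 40 validation calls, but `ConcurrencyLimiter` allows at most four of them to run at once and records the peak it observed.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable


class ConcurrencyLimiter:
    """Caps how many calls of one activity run at once, whatever the pool size."""

    def __init__(self, limit: int) -> None:
        self._slots = threading.BoundedSemaphore(limit)
        self._lock = threading.Lock()
        self.active = 0
        self.peak = 0  # highest concurrency observed, for monitoring

    def run(self, fn: Callable[..., Any], *args: Any) -> Any:
        with self._slots:  # blocks while `limit` calls are already in flight
            with self._lock:
                self.active += 1
                self.peak = max(self.peak, self.active)
            try:
                return fn(*args)
            finally:
                with self._lock:
                    self.active -= 1


def validate(txn_id: int) -> bool:
    time.sleep(0.01)  # stand-in for a CPU- or I/O-heavy validation task
    return txn_id % 2 == 0


limiter = ConcurrencyLimiter(limit=4)
with ThreadPoolExecutor(max_workers=16) as pool:
    results = list(pool.map(lambda t: limiter.run(validate, t), range(40)))

print(len(results), limiter.peak <= 4)  # 40 True
```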

🔍 Monitoring and Optimization

Once the system is live, monitoring is essential. The process model should include markers for key metrics. These markers help identify bottlenecks in the actual execution.

Key metrics to track include:

- Duration: How long each task takes.

- Throughput: How many instances complete per hour.

- Error Rate: The percentage of instances that fail.

- Queue Depth: How many instances are waiting for resources.

By correlating these metrics with the process diagram, teams can pinpoint exactly where delays occur. Is it the database write? Is it the external API call? The model serves as the map for these diagnostics.
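The four metrics can be computed from per-instance records. This sketch assumes a hypothetical `Instance` record exported by the engine, with per-task timings keyed by the task names used in the diagram, so each number maps back to a node in the model. It counts only successful completions toward throughput and treats every unfinished instance as queued; both are simplifying assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Instance:
    started: float                 # seconds since the start of the window
    ended: Optional[float] = None  # None: still queued or running
    failed: bool = False
    task_seconds: Dict[str, float] = field(default_factory=dict)


def summarize(instances: List[Instance], window_hours: float) -> dict:
    done = [i for i in instances if i.ended is not None]
    per_task: Dict[str, List[float]] = defaultdict(list)
    for inst in instances:
        for task, seconds in inst.task_seconds.items():
            per_task[task].append(seconds)
    succeeded = sum(1 for i in done if not i.failed)
    return {
        # Duration: mean time spent in each task, keyed by the task's name in the model
        "avg_task_seconds": {t: sum(v) / len(v) for t, v in per_task.items()},
        # Throughput: successful completions per hour of the observation window
        "throughput_per_hour": succeeded / window_hours,
        # Error rate: failed share of finished instances
        "error_rate": (len(done) - succeeded) / len(done) if done else 0.0,
        # Queue depth: instances that have started but not finished
        "queue_depth": len(instances) - len(done),
    }


instances = [
    Instance(0, 10, False, {"validate": 2.0, "db_write": 6.0}),
    Instance(0, 20, True, {"validate": 4.0}),
    Instance(5, None, False, {"validate": 3.0}),
    Instance(6, 30, False, {"validate": 3.0, "db_write": 8.0}),
]
print(summarize(instances, window_hours=0.5))
```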

🔒 Security and Compliance

High-volume systems often handle sensitive data. Security controls must be embedded in the process flow. Authentication and Authorization tasks should be explicit nodes in the diagram.

- Access Control: Ensure only authorized users can trigger specific tasks.

- Data Masking: Apply masking rules before data is passed to external services.

- Audit Trails: Log every state change for compliance purposes.

Compliance requirements often dictate specific ordering of operations. For instance, data encryption must occur before storage. BPMN allows these constraints to be visualized. This ensures that regulatory requirements are met without relying on developer memory.
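Masking and auditing can be enforced at the boundary where data leaves the process, as in this hypothetical sketch. The sensitive field names, the `send` callback, and the state names are assumptions. The point is the ordering: the payload is masked before it is sent, and the state change is appended to an audit log whose entries are never modified.

```python
import time
from typing import Any, Callable, Dict, List

# Hypothetical field names treated as sensitive.
SENSITIVE_FIELDS = {"card_number", "iban"}


def mask_value(value: str, visible: int = 4) -> str:
    """Replace all but the last `visible` characters with '*'; fully mask short values."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


def mask(payload: Dict[str, Any]) -> Dict[str, Any]:
    return {
        key: mask_value(val) if key in SENSITIVE_FIELDS and isinstance(val, str) else val
        for key, val in payload.items()
    }


def record_transition(audit_log: List[dict], instance_id: str, from_state: str, to_state: str) -> None:
    # Append-only: entries are never updated or deleted.
    audit_log.append({"instance": instance_id, "from": from_state, "to": to_state, "at": time.time()})


def send_to_external(
    payload: Dict[str, Any],
    send: Callable[[Dict[str, Any]], None],
    audit_log: List[dict],
    instance_id: str,
) -> None:
    masked = mask(payload)  # masking happens before anything leaves the process
    send(masked)
    record_transition(audit_log, instance_id, "validated", "sent_to_partner")


outbox: List[dict] = []
audit: List[dict] = []
send_to_external({"card_number": "4111111111111111", "amount": 250}, outbox.append, audit, "order-42")
print(outbox)  # [{'card_number': '************1111', 'amount': 250}]
```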

🔄 Continuous Improvement

Process models are not static. As business rules change, the model must evolve. Versioning the process definition is critical: it lets in-flight instances finish on the version they started with while new instances start on the new version.

- Migration: Define how instances created under version 1 behave under version 2.

- A/B Testing: Run different process versions on subsets of traffic to compare performance.

- Feedback Loops: Use data from production to refine the model.
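Routing traffic between definition versions and migrating running instances can be sketched as follows. The function names and the activity-mapping format are hypothetical; real engines expose their own migration APIs. Hashing a business key gives a stable split, so the same order always lands on the same version across retries.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict


def choose_version(business_key: str, rollout_percent: int, old: str = "v1", new: str = "v2") -> str:
    """Deterministically route a share of new instances to the new definition.

    Hashing the business key (rather than picking randomly) means a retried
    request for the same order always lands on the same version.
    """
    bucket = int(hashlib.sha256(business_key.encode("utf-8")).hexdigest(), 16) % 100
    return new if bucket < rollout_percent else old


@dataclass(frozen=True)
class RunningInstance:
    instance_id: str
    version: str
    current_activity: str


def migrate(inst: RunningInstance, activity_map: Dict[str, str], target_version: str) -> RunningInstance:
    """Move an in-flight instance to a new version using an explicit activity mapping."""
    if inst.current_activity not in activity_map:
        # No safe mapping for this wait state: leave the instance on its old version.
        raise ValueError(f"no mapping for activity {inst.current_activity!r}")
    return RunningInstance(inst.instance_id, target_version, activity_map[inst.current_activity])


print(migrate(RunningInstance("i-1", "v1", "ValidateOrder"), {"ValidateOrder": "ValidateOrderV2"}, "v2"))
# RunningInstance(instance_id='i-1', version='v2', current_activity='ValidateOrderV2')
```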

Regular reviews of the process model ensure it remains aligned with system capabilities. If a bottleneck is identified, the model can be adjusted to distribute load more evenly. This iterative approach maintains system health over time.

📋 Summary of Advanced Techniques

Implementing BPMN for high-volume transaction systems requires a shift in mindset. It is not just about drawing boxes and arrows. It is about modeling concurrency, state, and failure. The patterns discussed here provide a framework for building resilient systems.

Key takeaways include:

- Use Parallel Gateways to maximize throughput where independence exists.

- Decouple external dependencies using Asynchronous Message Events.

- Implement Compensation Handlers for complex error recovery.

- Batch operations to reduce database overhead.

- Monitor metrics against the model to identify bottlenecks.

By adhering to these patterns, architects can create process models that scale. The model becomes a reliable specification for the execution engine, ensuring that high-volume transactions are handled with precision and stability.
